AWS for beginners - S3

Let’s say that we want to operate an application where lots of images (Pinterest) or videos (Netflix) are needed. We don’t even have to go that far though, a static website with our product will be just enough.

We obviously don’t want to maintain our own server, and pay for the hardware, operating system, cables, cords and security. Instead, we want to store our data in the cloud.

One option is to use S3 from AWS.

1. What is S3?

S3 stands for Simple Storage Service, and it’s the storage for the internet. We can upload and retrieve any data (images, videos, static websites etc.) from anywhere in the world if we have the permission to do so.

S3 - depending on the storage class - is highly available with 99.9% - 99.99% per year availability, which means that data can’t be access for maximum a few minutes per year.

Our data are stored in various locations within a region, and this results in eleven 9’s durability (99.999999999%, again, depending on the storage class). This figure basically means that AWS guarantees that our data will be there with that much probability. If we subtract this number from 100%, we will get the probability of AWS losing our data.

The latter number is extremely small; it’s almost zero, so it’s highly unlikely that this event will occur.

2. S3 vs databases

S3 should not be confused with databases. AWS has managed database solutions, like RDS for relational databases (e.g. MySQL or PostgreSQL) or DocumentDB for NoSQL databases with MongoDB compatibility. Or, if someone is really into adventures and likes managing things by themselves, they can install their database engine on an EC2 instance. These databases are for storing data, like name, address, pet and favourite colour of people.

S3 is an object storage service for files, and it’s more like an external hard drive or Dropbox with some key differences in how it works.

3. How does S3 work?

S3 is an object storage system, and as such, data is stored as a whole, including metadata.

3.1. Buckets, objects and naming

The building blocks of S3 are called bucket and object. Buckets are containers for data files similarly to the folders in our computer, and objects (file, data) are stored inside buckets.

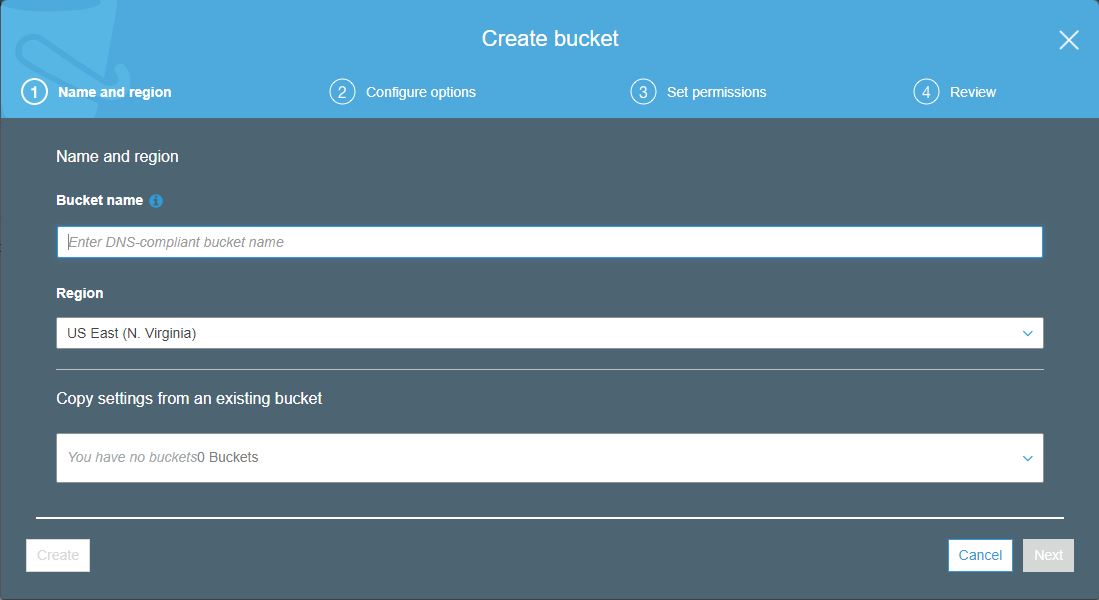

Buckets can be created in various ways. The picture below shows a snapshot of the S3 section in the console.

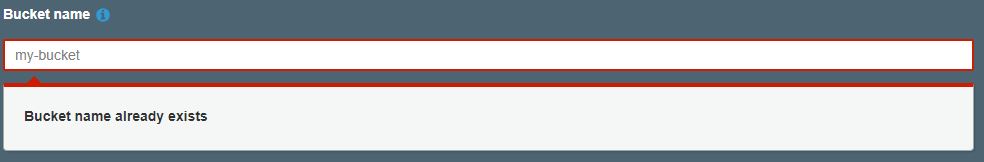

As it can be seen, the bucket will need a name. This name must be unique in the whole AWS world, i.e. no two AWS accounts can create a bucket with the same name. As less creative names go quickly, this rule will make the selection of my-bucket as a bucket name impossible, unless the owner of this bucket deletes it.

There are other rules for naming, like it must start and end in a small letter or it cannot contain invalid characters (e.g. _), but the most important of all is the uniqueness of names.

3.2. Buckets are flat containers

Buckets are flat in the sense that Russian doll-like buckets don’t exist, i.e. we can’t have a bucket inside another bucket. Only objects can be placed inside buckets.

We can have unlimited number of objects but the size of one object can’t exceed 5 TB. That’s not bad at all.

One account can have up to 100 buckets, but if more needed, the account owner can submit a service limit increase request to AWS.

3.3. Buckets are region specific

We also have to select a region.

S3 buckets (and the objects stored inside) are region specific, and the files will be stored in several different locations within the region, hence the S3’s famous durability indicator.

But selecting a region where our files are stored doesn’t mean that they are not available from outside that region.

As it was mentioned above, it’s possible to store static websites on S3, and it would be really sad if only users from that region could access the site.

However, it’s a good idea to select a region which is close to the users, so that response time can be decreased when files are accessed or uploaded.

3.4. Permissions

Buckets and objects come with permissions. When a bucket is created or a file (object) is uploaded, we can (and should) set permissions on them.

This means that we can decide who can do what with our files. Can everyone read our files? Or just a restricted (say, authenticated) group of users can access the data? Can they modify or delete them?

As stated above, S3 can store static websites. It’s a good idea to give read access to everyone, else people won’t be able to read our site and we can’t sell them our product.

On the other hand, it can happen that we might only want to share data with our subscribers. In this case, we give read permission to only a small group of users, those who are already authenticated (i.e. paid the big bucks and subscribed).

Permissions are based on policies and access control lists, and they are rather difficult. If the reader is interested, they can read more about policies and ACLs in this great blog post on the AWS website.

3.5. Accessing objects

Objects are accessed through HTTP requests therefore each object has a unique URL.

This is why the name of the objects has to be unique. If it wasn’t like that, one URL would provide access to multiple objects, and this would lead to ambiguity and confusion.

When a GET request is made to the URL of the object, it’s the latest version of the object which is fetched, unless versioning is enabled and the version is specified.

If the requested object is found on S3, which is highly likely unless it has been deleted, S3 will respond with a 200 status code (given that we have been granted permission to access the object). In case something goes wrong, an error code is returned. The full list of error codes and when they are returned can be found here.

4. Conclusion

S3 is one of the core services AWS offer. It’s the storage of the internet, where files, images, videos or even static websites can be stored.

The primary resources of S3 are the buckets and the objects. Buckets are containers, and objects are the data we want to store on S3.

Not everyone can access the objects. Permissions can be given to users and other accounts to read, write, update or delete objects, which occur through HTTP requests.

Thanks for reading and see you next time.