Elastic Container Service for beginners - Part 1

Amazon Web Services (AWS) keeps up with the current trends, and provides a service for our containerized applications. This service is called Amazon Elastic Container Service, or ECS.

1. What is ECS?

ECS is a container management service that supports Docker containers. It provides a platform for our container-based applications without us having to manage the containers ourselves.

1.1. No container management

There’s no need to orchestrate the containers ourselves, because ECS does it for us. It starts and stops containers, and if a container goes down (for example, because the underlying instance fails), ECS automatically starts a new one to replace it.
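The replacement behaviour described above can be pictured as a loop that compares the number of containers we want with the number that is actually running. The snippet below is only a toy simulation of that idea, not how ECS is implemented: `ToyService`, `reconcile` and `fail` are hypothetical names made up for this illustration.

```python
from dataclasses import dataclass, field
from itertools import count


@dataclass
class ToyService:
    """Hypothetical stand-in for the ECS scheduler; not an AWS API."""
    desired_count: int
    running: set = field(default_factory=set)
    _ids: count = field(default_factory=lambda: count(1), repr=False)

    def reconcile(self) -> list:
        """Start containers until the running count matches the desired count."""
        started = []
        while len(self.running) < self.desired_count:
            container_id = f"container-{next(self._ids)}"
            self.running.add(container_id)
            started.append(container_id)
        return started

    def fail(self, container_id: str) -> None:
        """Simulate a container disappearing, e.g. because its instance died."""
        self.running.discard(container_id)


service = ToyService(desired_count=2)
service.reconcile()                 # starts container-1 and container-2
service.fail("container-1")         # one container goes down
replacements = service.reconcile()  # the loop notices the gap and fills it
print(replacements)                 # ['container-3']
```

The point of the sketch is that we only state the desired number of containers, and the replacement happens without any action on our part.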

1.2. High availability

ECS is a regional service, which means that we can launch our containers in any region, and the deployments in different regions will be separate entities. It’s also highly available, because the containers can run in multiple availability zones (AZ). This architecture makes the containerized application more robust, because it will still be available even if one AZ (one or more isolated data centers within a region, where the underlying servers run) goes down.

1.3. It can be managed

We can also decide how much of the underlying infrastructure we want to manage ourselves by choosing a launch type.

Containers run on normal EC2 instances that have some special software (the ECS agent and Docker) installed, which makes it possible to manage Docker containers on them.

If we want more control over the instances, we can choose the EC2 launch type. In this case, we need to provision the instances ourselves.

With the Fargate launch type, the infrastructure is managed by AWS. All we have to do is register the task definition for the containers (more on that below), and Fargate will launch them.

2. Main parts of the ecosystem

ECS is great, but it’s easy to get confused about how to set it up, because the process is not straightforward. The next few sections clarify the most important concepts for launching containerized applications in ECS.

2.1. Repository

Since we work with containers, ECS needs to know where to find the image from which the container will be started.

This can be Docker Hub if we want to keep Docker-related services in one basket, or it can be AWS’s own container registry, called ECR. AWS hosts the images in a highly available (i.e. redundant) environment, and we only pay for the storage we use and the data we transfer.
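To make the difference between the two registries concrete, here is a minimal sketch of what the image reference looks like in each case. The account ID, region and repository names are placeholders, and the helper functions are hypothetical names used only for this illustration.

```python
def ecr_image_uri(account_id: str, region: str, repository: str, tag: str = "latest") -> str:
    """Build the image URI of a private ECR repository."""
    return f"{account_id}.dkr.ecr.{region}.amazonaws.com/{repository}:{tag}"


def docker_hub_image(repository: str, tag: str = "latest") -> str:
    """Docker Hub images can be referenced by repository name and tag."""
    return f"{repository}:{tag}"


# Placeholder values; replace them with your own account, region and repository.
print(ecr_image_uri("123456789012", "eu-west-1", "orders", "1.0"))
# 123456789012.dkr.ecr.eu-west-1.amazonaws.com/orders:1.0
print(docker_hub_image("nginx"))
# nginx:latest
```

Either string can be used as the image location of a container in ECS.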

ECR works well with continuous integration: a pipeline can push a new image to ECR whenever the application code changes, and the application in ECS can then be updated from that image.

2.2. Task Definition

The Task Definition, as the official description states, is the blueprint for the containers. It contains all the necessary information about the containers.

Task Definitions are a similar concept to Docker Compose files.

In the docker-compose.yml file, we define the image, environment variables, volumes, ports, network settings etc., all of which are necessary for running the container. Task Definitions serve the same purpose in ECS.
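The parallel with Docker Compose can be sketched in a few lines. The function below translates a Compose-style service (written as a Python dictionary instead of YAML) into the shape of a container definition inside a Task Definition. The function name and the sample service are hypothetical, and the sketch only handles the image and environment variables given as a mapping.

```python
def compose_service_to_container(name: str, service: dict) -> dict:
    """Translate one Compose-style service into an ECS-style container definition."""
    # ECS expects environment variables as a list of name/value pairs.
    environment = [
        {"name": key, "value": str(value)}
        for key, value in service.get("environment", {}).items()
    ]
    return {
        "name": name,
        "image": service["image"],
        "environment": environment,
        "essential": True,  # the task stops if this container stops
    }


# What a service in docker-compose.yml might look like after loading the YAML.
compose_service = {
    "image": "nginx:1.25",
    "environment": {"APP_ENV": "production", "WORKERS": 4},
}

container = compose_service_to_container("web", compose_service)
print(container["environment"])
# [{'name': 'APP_ENV', 'value': 'production'}, {'name': 'WORKERS', 'value': '4'}]
```

The information is the same in both formats; only the structure differs.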

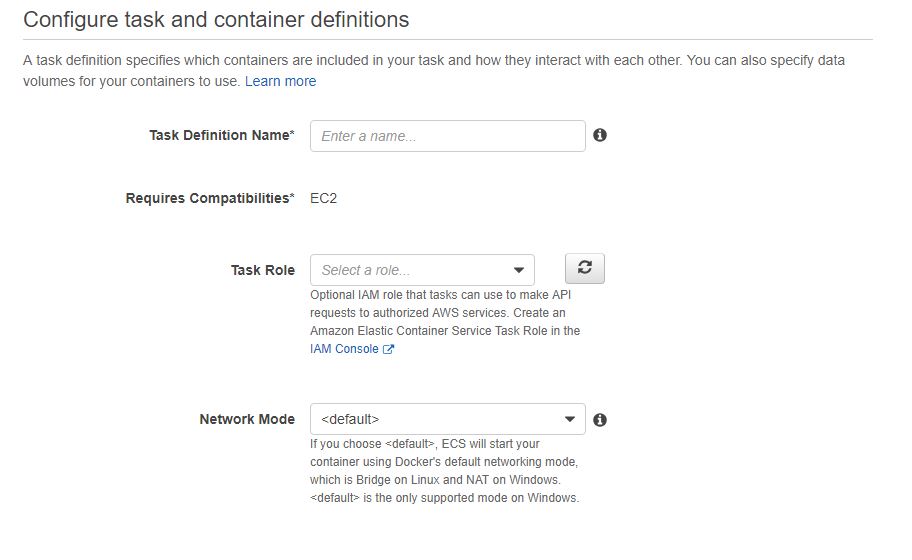

First, when we create a new Task Definition, we have to specify the launch type (EC2 or Fargate), give it a name, define the network mode (e.g. bridge) and add containers.

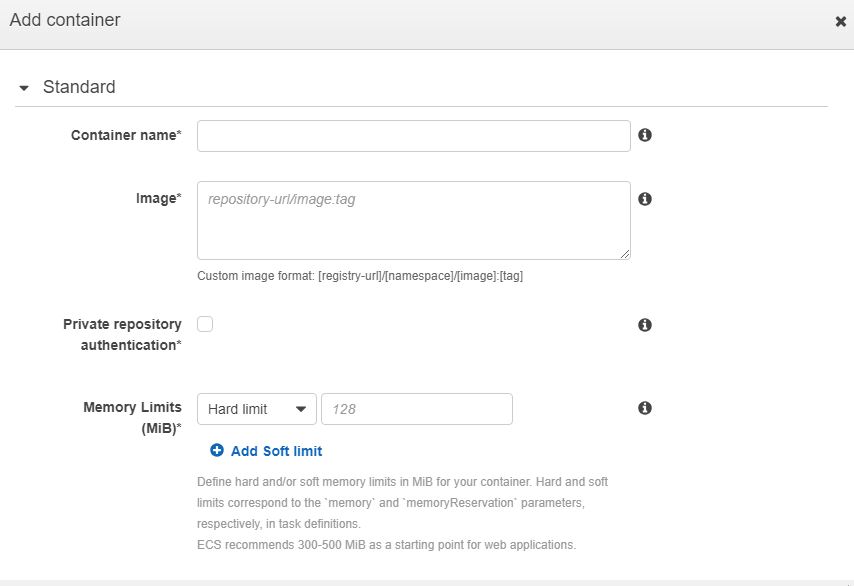

When we add containers to the Task Definition, we’ll need to refer to the URL of the image in the repository (Docker Hub or ECR, see above). We can also define port mappings (in host:container order, similarly to the docker run command), environment variables, network settings and much more.

When it’s done, we’ll have everything container-related set up, but the containers won’t run yet, because we haven’t created the environment and the underlying infrastructure.
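The settings we enter in the console end up in a JSON document. Below is a minimal sketch of such a document for the EC2 launch type with the bridge network mode. The family name, container name, image and memory value are placeholders, and `parse_port_mapping` is a hypothetical helper that shows how a host:container pair maps to the JSON fields.

```python
import json


def parse_port_mapping(mapping: str) -> dict:
    """Turn a 'host:container' string (as used by docker run -p) into an ECS port mapping."""
    host_port, container_port = mapping.split(":")
    return {
        "hostPort": int(host_port),
        "containerPort": int(container_port),
        "protocol": "tcp",
    }


task_definition = {
    "family": "web-app",                 # the name of the Task Definition (placeholder)
    "networkMode": "bridge",
    "requiresCompatibilities": ["EC2"],  # the launch type
    "containerDefinitions": [
        {
            "name": "web",
            "image": "nginx:latest",     # Docker Hub image; an ECR URI works too
            "memory": 256,               # MiB reserved for the container
            "essential": True,
            "portMappings": [parse_port_mapping("8080:80")],
        }
    ],
}

print(json.dumps(task_definition, indent=2))
```

With this mapping, port 8080 on the instance is forwarded to port 80 inside the container.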

2.3. Cluster

The cluster will provide the infrastructure for the containers.

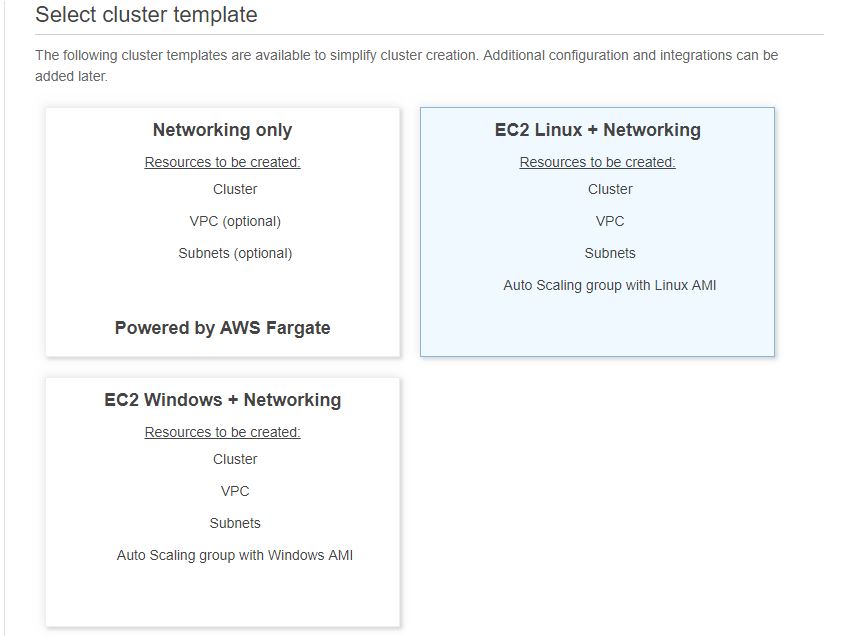

First, we decide if we want the Fargate or the EC2 launch type, and if we go with EC2, which operating system (Linux or Windows) we want the containers to run on.

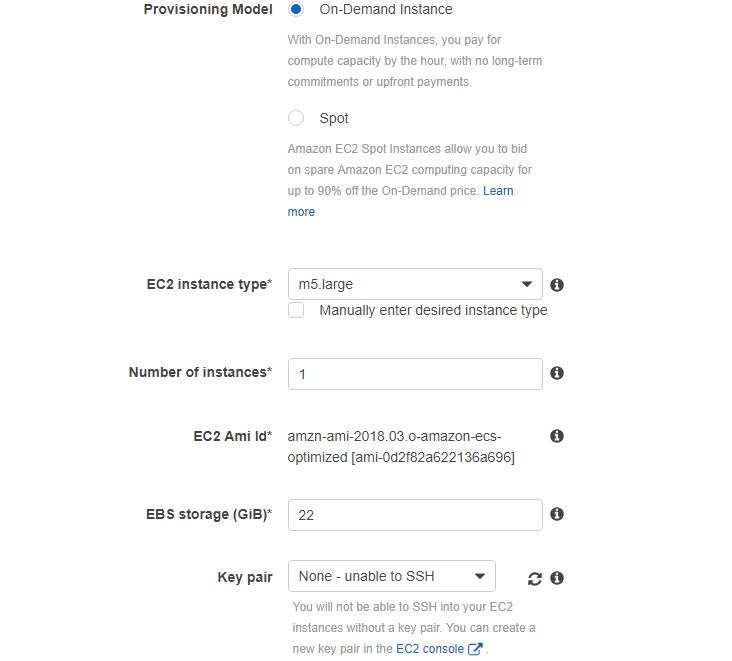

If we choose more control (the EC2 launch type), AWS will offer us lots of options.

We can choose the instance type (t2.micro is eligible for the AWS Free Tier), how many instances we want to launch (at least two if we want a highly available architecture) and which key pair to use if we want to SSH into the underlying instances.

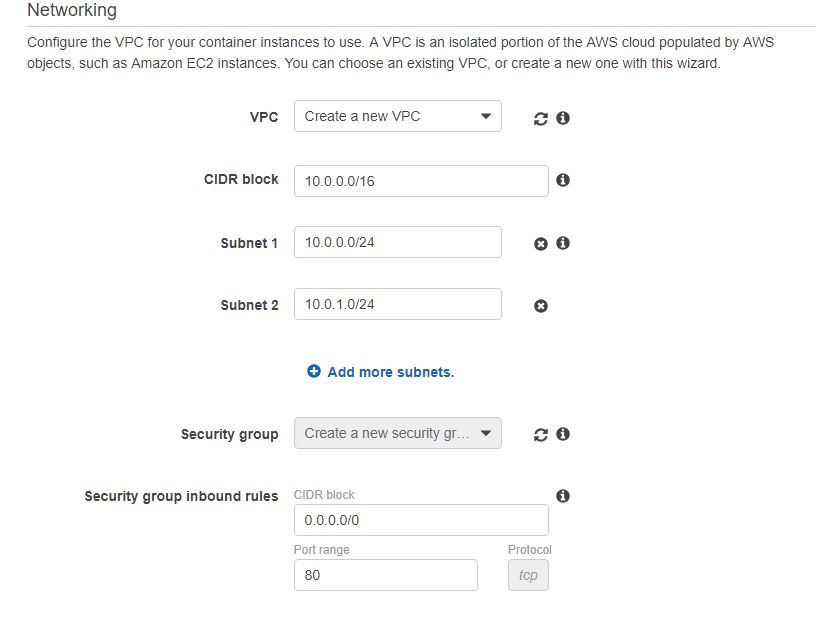

We can also choose whether we want to create a new VPC for the container instances or use an existing one, and specify the security group settings.

AWS will create the whole stack of these building blocks for us, which is really cool!

2.4. Service

The containers are still not running. In order to start them, we’ll need to create a service inside a cluster.

The service connects the Task Definition to the cluster by launching the tasks. We can define how many tasks (container replicas) we want to run.

For example, if we chose to have two instances when we defined the cluster in the last step, and now we start two tasks, ECS will by default try to spread these tasks evenly over the instances, i.e. both underlying EC2 servers will run one set (replica) of containers.

If we launch four tasks, then two tasks (and therefore two replicas of each container) will be started on each instance.
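The arithmetic behind these examples can be checked with a small simulation. It hands out tasks to instances in turn, which is a deliberate simplification and not the actual ECS scheduler. The function and instance names are hypothetical.

```python
from itertools import cycle


def spread_tasks(task_count: int, instances: list) -> dict:
    """Assign tasks to instances one by one, round-robin style."""
    placement = {instance: 0 for instance in instances}
    # zip stops after task_count items, so each task gets exactly one instance.
    for _, instance in zip(range(task_count), cycle(instances)):
        placement[instance] += 1
    return placement


instances = ["instance-a", "instance-b"]
print(spread_tasks(2, instances))  # {'instance-a': 1, 'instance-b': 1}
print(spread_tasks(4, instances))  # {'instance-a': 2, 'instance-b': 2}
```

With an odd number of tasks, one instance simply ends up with one task more than the other.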

If we want a different number of replicas for different containers (for example, three replicas of the order module but only two of the products module), we’ll need to define separate task definitions for them and run each one as its own service.
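As a sketch of the order and products example, the two services could be described like this. The keys follow the parameter names of the ECS CreateService API, but the cluster, service and task definition names are placeholders, and `service_spec` is a hypothetical helper. Nothing is sent to AWS here.

```python
def service_spec(cluster: str, name: str, task_definition: str, desired_count: int) -> dict:
    """Describe one ECS service: which task definition to run, and how many copies."""
    return {
        "cluster": cluster,
        "serviceName": name,
        "taskDefinition": task_definition,
        "desiredCount": desired_count,
        "launchType": "EC2",
    }


# One service per module, each with its own task definition and replica count.
services = [
    service_spec("shop-cluster", "orders-service", "orders-task", 3),
    service_spec("shop-cluster", "products-service", "products-task", 2),
]

for spec in services:
    print(spec["serviceName"], "->", spec["desiredCount"], "tasks")
# orders-service -> 3 tasks
# products-service -> 2 tasks
```

Assuming boto3 is installed and AWS credentials are configured, a dictionary like these could be passed to the ECS client as `create_service(**spec)`.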

When the service is created, the containers will finally run, and our application will be available.

3. Conclusion

ECS is a great service to launch containerized applications.

Setting up ECS is not easy, as it consists of multiple steps, such as creating Task Definitions, clusters and services.

In Part 2 of the ECS article series, I’ll launch a containerized application on AWS ECS.

Thanks for reading, and see you next time.